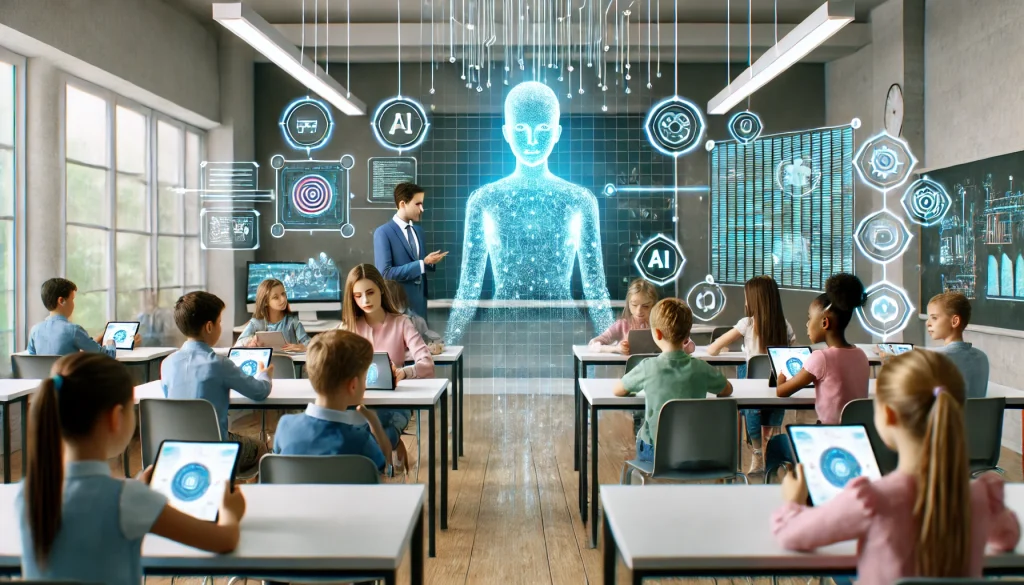

Artificial intelligence is rapidly transforming the educational landscape, offering new opportunities for personalized learning, efficient grading, and adaptive teaching tools. However, as AI systems become more integrated into schools, a range of ethical challenges has emerged. These issues touch on student privacy, fairness, transparency, and the evolving role of teachers and technology in shaping young minds.

Understanding these concerns is crucial for educators, parents, policymakers, and technologists who want to ensure that AI is used responsibly and equitably in educational settings. This article explores the main ethical dilemmas, provides practical insights, and highlights ways to navigate the complexities of AI-driven learning environments.

Readers interested in the intersection of artificial intelligence and STEM education may also find value in "AI and STEM learning explained," which covers how technology is shaping modern classrooms.

Key Privacy Concerns in AI-Driven Classrooms

One of the most pressing ethical challenges of AI in classrooms is student privacy. AI-powered tools often collect and analyze vast amounts of data, including academic performance, behavioral patterns, and even biometric information. While this data can help personalize learning, it also raises questions about consent, data storage, and potential misuse.

- Data Collection: AI systems may gather sensitive information without students or parents fully understanding what is being collected or how it will be used.

- Data Security: Schools must ensure that student data is securely stored and protected from unauthorized access or breaches.

- Consent and Transparency: Clear policies are needed to inform families about data practices and to obtain meaningful consent.

Bias and Fairness in AI Educational Tools

Another significant concern involves bias and fairness in AI algorithms. These systems are only as objective as the data and assumptions behind them. If training data reflects existing inequalities or stereotypes, AI tools may unintentionally reinforce them, leading to unfair treatment of certain student groups.

- Algorithmic Bias: AI grading systems or adaptive learning platforms can favor or disadvantage students based on race, gender, socioeconomic status, or learning abilities.

- Transparency: Many AI systems operate as “black boxes,” making it difficult for teachers and students to understand how decisions are made.

- Accountability: When errors or unfair outcomes occur, it can be challenging to determine who is responsible—the developer, the school, or the AI itself.

Addressing these issues requires ongoing evaluation, diverse data sets, and open communication between educators and technology providers.
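As a rough illustration of what "ongoing evaluation" might look like in practice, the sketch below compares how often an AI grading tool marks students as passing across two groups. Everything here is an assumption made for illustration: the record layout, the field names `group` and `passed`, the sample data, and the 0.8 review threshold (borrowed from the "four-fifths" heuristic used in employment contexts, not an established standard for education). A low ratio does not prove bias; it only signals that a human should take a closer look.

```python
from collections import defaultdict


def pass_rates(records, group_key="group", outcome_key="passed"):
    """Return the share of positive outcomes for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record[outcome_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the lowest group rate to the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group has any positive outcome, so rates are equal
    return min(rates.values()) / highest


# Hypothetical outcomes produced by an AI grading tool.
sample_records = [
    {"group": "A", "passed": True},
    {"group": "A", "passed": True},
    {"group": "A", "passed": True},
    {"group": "A", "passed": False},
    {"group": "B", "passed": True},
    {"group": "B", "passed": True},
    {"group": "B", "passed": False},
    {"group": "B", "passed": False},
]

REVIEW_THRESHOLD = 0.8  # assumed cutoff for flagging a result for human review

rates = pass_rates(sample_records)
ratio = disparity_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

A check like this is only a starting point. Small groups produce noisy rates, and a real audit would also examine why the outcomes differ before drawing conclusions.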

Impact on Teacher Roles and Student Autonomy

The increasing presence of AI in classrooms also raises questions about the evolving roles of teachers and the autonomy of students. While AI can automate administrative tasks and provide tailored instruction, it should not replace the human elements of teaching, such as empathy, mentorship, and guidance in critical thinking.

- Teacher Oversight: Educators must remain actively involved in interpreting AI-generated insights and making final decisions about student learning.

- Student Agency: Overreliance on AI tools may limit opportunities for students to develop independence, creativity, and problem-solving skills.

- Professional Development: Teachers need ongoing training to effectively integrate AI into their practice while maintaining ethical standards.

Transparency and Explainability in Educational AI

For AI to be trusted in educational contexts, it must be transparent and explainable. Students, teachers, and parents should have access to clear information about how AI systems work, what data they use, and how decisions are reached.

- Explainable AI: Tools should provide understandable feedback and rationales for their recommendations or assessments.

- Open Communication: Schools should foster dialogue about the benefits and risks of AI, encouraging questions and feedback from all stakeholders.

- Ethical Guidelines: Adopting frameworks and best practices can help ensure responsible use of AI in education.

For a broader overview of how artificial intelligence is being applied in educational settings, the Wikipedia entry on artificial intelligence in education offers additional context and resources.

Balancing Innovation with Ethical Responsibility

As schools continue to adopt AI technologies, it is essential to balance innovation with ethical responsibility. This means prioritizing student well-being, promoting fairness, and ensuring that technology serves as a tool for empowerment rather than control.

- Policy Development: Educational institutions should establish clear policies for AI use, including guidelines for data privacy, bias mitigation, and accountability.

- Stakeholder Involvement: Involving students, parents, teachers, and community members in decision-making can help address concerns and build trust.

- Continuous Monitoring: Regularly reviewing the impact of AI tools allows schools to identify and address emerging ethical issues.

By fostering a culture of ethical awareness and proactive engagement, schools can harness the benefits of AI while minimizing potential harms.

Frequently Asked Questions

What are the main ethical concerns with AI in education?

The most significant issues include student privacy, data security, algorithmic bias, lack of transparency, and the shifting roles of teachers and students. Addressing these concerns requires clear policies, ongoing oversight, and open communication.

How can schools ensure fairness when using AI tools?

Schools can promote fairness by using diverse and representative data sets, regularly auditing AI systems for bias, and involving educators in interpreting AI-generated results. Transparency and explainability are also key to building trust.

What steps can educators take to protect student privacy?

Educators should inform students and families about what data is being collected, obtain meaningful consent, and ensure that all data is securely stored. Limiting data collection to what is necessary and regularly reviewing privacy practices are also important.
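For teams that handle the technical side, limiting data collection to what is necessary could look something like the sketch below. It keeps only an approved set of fields and replaces the student identifier with a keyed hash before a record is passed to an external tool. The field names, the `ALLOWED_FIELDS` set, and the example key are hypothetical. Note that pseudonymization of this kind reduces exposure but does not make data anonymous, and the secret key must itself be stored securely.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields the learning tool is assumed to need.
ALLOWED_FIELDS = {"grade_level", "quiz_score"}


def pseudonymize_id(student_id: str, secret_key: bytes) -> str:
    """Replace a student ID with a keyed SHA-256 hash (HMAC)."""
    digest = hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Drop fields outside the allow-list and swap the ID for a pseudonym."""
    reduced = {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
    reduced["student_ref"] = pseudonymize_id(record["student_id"], secret_key)
    return reduced


# Hypothetical record and key, for illustration only.
example_key = b"replace-with-a-securely-stored-secret"
raw_record = {
    "student_id": "S-1001",
    "name": "Example Student",
    "home_address": "123 Example Street",
    "grade_level": 7,
    "quiz_score": 88,
}

shared_record = minimize_record(raw_record, example_key)
print(sorted(shared_record))
```

Using an allow-list rather than a block-list means that any new field added to the source record stays private by default until someone deliberately approves it.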